This post discusses some thoughts on using MobBob with other bodies.

Cliff posted the following question in the comments section:

Dear Cevinius,

I am developing a robot based on the Poppy robot chassis. I am not using the expensive Dynamixel motors, and I am beating my head against the wall trying to learn programming. It's mostly a cart-before-the-horse issue. I’m switching gears and am going to build a simpler biped to learn more.

I like the autonomous aspects of your robot: the voice recognition, the vision from the camera, and the use of smartphone sensors in your app. Granted, this is an “as I learn more” question, but can the app be modified to add more leg servos, arms, and a gripper? Is that all in the Arduino code, or in the Android app as well?

Awesome project! I can’t wait to get started and have my Samsung S4 dancing around on the table.

Thanks

Cliff

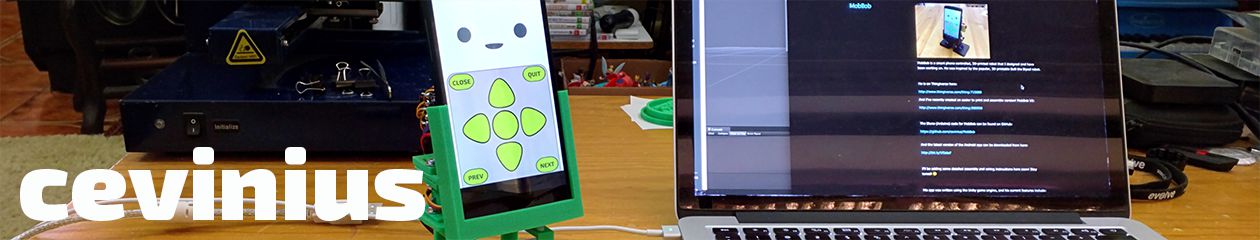

I started writing a reply in the comments section… but it got fairly long, and I started including some information that might be useful for other people who may be thinking about other bodies for MobBob. (E.g., earlier in the week, I saw a MobBob remix on Thingiverse, created by user Zalophus, with arms!)

So, I decided to turn the answer into a post.

Hi Cliff,

If you are learning programming at the same time, I think a simpler biped is a good choice. I think getting Poppy to walk and balance will be very challenging. (It seems like Poppy’s developers are continuing to work on it too.) A simpler biped like MobBob still takes a fair amount of programming, but at least you don’t need to worry about balance that much. It’s mostly about smooth motions.

The way I have divided the code up in MobBob is as follows.

The Arduino receives “high-level” commands from the app and handles all the details of movement. For example, its command set includes things like “Take a step forward”, “Take a step to the left”, “Bounce up and down three times”, etc. The app sends these commands to the Arduino, and the Arduino code smoothly drives the servos to perform that animation/action.
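
To make this split concrete, here is a rough sketch of the idea (not MobBob’s actual firmware): one short command from the app expands, on the robot, into a smooth series of servo poses. It’s written in Python for brevity; on the real robot this logic would be Arduino C++. The command names, the four-servo layout, the angles and the number of interpolation steps are all made up for illustration.

```python
# Hypothetical sketch: one high-level command expands into many smooth servo poses.
# Servo order (assumed): left hip, left foot, right hip, right foot; angles in degrees.
STAND = (90, 90, 90, 90)

# Hypothetical keyframes for each command, starting from STAND (not MobBob's real values).
ANIMATIONS = {
    "FORWARD": [(90, 70, 90, 70), (60, 70, 60, 70), (60, 90, 60, 90), STAND],
    "BOUNCE": [(90, 60, 90, 120), STAND],
}


def interpolate(start, end, steps):
    """Yield `steps` evenly spaced poses from `start` (exclusive) to `end` (inclusive)."""
    for i in range(1, steps + 1):
        t = i / steps
        yield tuple(round(a + (b - a) * t) for a, b in zip(start, end))


def run_command(line, steps_per_frame=10):
    """Turn one command line such as 'BOUNCE 3' into the list of poses sent to the servos."""
    name, *args = line.split()
    repeats = int(args[0]) if args else 1
    frames = ANIMATIONS[name] * repeats
    poses = []
    current = STAND
    for frame in frames:
        # Smoothly move from the current pose to the next keyframe.
        poses.extend(interpolate(current, frame, steps_per_frame))
        current = frame
    return poses
```

In this sketch, the app only ever sends the text `BOUNCE 3`; the 60 intermediate poses are generated and used entirely on the robot side.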

(Note: The Arduino code does have a command for moving the servos to specific positions. So in theory, an app could make MobBob move in new sequences that are not in the Arduino code’s command set. However, I think this is a harder way to do things unless the app specifically requires dynamic movement sequences that are composed at run time.)
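
Sending raw servo positions might look something like the sketch below. The `SERVOS` message name, its comma-separated format and the four-servo layout are assumptions, not MobBob’s real protocol. The thing to notice is that the app now has to know how many servos the body has and what order they’re in, which is part of why this route is harder and ties the app to one particular body.

```python
# Hypothetical low-level command: the app sends explicit servo angles.
# The 'SERVOS a,b,c,d' message format is assumed for illustration only.
SERVO_COUNT = 4  # assumed: a MobBob-style body with four servos
MIN_ANGLE, MAX_ANGLE = 0, 180


def encode_positions(angles):
    """App side: build a raw-position message for one pose."""
    if len(angles) != SERVO_COUNT:
        raise ValueError(f"expected {SERVO_COUNT} angles, got {len(angles)}")
    return "SERVOS " + ",".join(str(int(a)) for a in angles)


def decode_positions(message):
    """Robot side: parse the message and clamp each angle to the servo's range."""
    name, payload = message.split(" ", 1)
    if name != "SERVOS":
        raise ValueError(f"not a raw-position command: {name}")
    angles = [int(part) for part in payload.split(",")]
    return [min(MAX_ANGLE, max(MIN_ANGLE, a)) for a in angles]


# An app composing a sequence at run time, e.g. rocking the feet back and forth.
sequence = [encode_positions((90, 90 + d, 90, 90 - d)) for d in (0, 20, 40, 20, 0)]
```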

Because the Arduino code handles the details of movement (e.g. how to move the legs to take a step), the app doesn’t need to worry about those details and can focus on interactions with the user. The app’s job is to handle the vision, voice recognition, and touch screen interactions. It then sends commands to the Arduino to get MobBob to move when needed.

(Note: It is also possible for the app to request the Arduino to send back sensor readings (e.g. for a distance sensor connected to the Arduino), but I’m not using this currently.)
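
A sensor request could be a simple query/reply pair, as in this sketch. The `DISTANCE?` query, the `DISTANCE <cm>` reply and the `read_distance_cm` callback are hypothetical; a real robot would read an actual sensor wired to the Arduino.

```python
# Hypothetical request/reply exchange for a sensor reading.
# The 'DISTANCE?' query and 'DISTANCE <cm>' reply formats are assumptions.


def handle_query(line, read_distance_cm):
    """Robot side: answer a sensor query using the supplied sensor-reading function."""
    if line.strip() == "DISTANCE?":
        return f"DISTANCE {read_distance_cm()}"
    return f"ERROR unknown query {line.strip()}"


def parse_distance(reply):
    """App side: extract the distance in centimetres, or None if the reply is not a reading."""
    name, _, value = reply.partition(" ")
    return int(value) if name == "DISTANCE" and value.isdigit() else None
```

An app could, for example, ask for a reading before sending a step-forward command and stop if something is too close.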

This is not the only way to divide up the responsibilities between the Arduino and the app, but I’ve chosen this way for MobBob as I wanted to make it easier/quicker for people to develop new MobBob apps.

With this architecture, people can develop a new app for MobBob without worrying about the details of how to get MobBob to walk, etc. The app developer only needs to focus on the AI in the app and how the app should interact with the user.

So… with that background information… I’ll return to your question…

Theoretically, if you have a different bipedal body (e.g. more joints on the legs, moving arms, etc.), you could write new Arduino code that understands the same command set as MobBob (e.g. take a step forward, bounce up and down, etc.). If you then use the existing app, when the app sends the command to take a step forward, the Arduino can interpret that command in its own way and take the step in a way that suits the new body.

This means it should be possible to use the existing app with other bodies.

If the arm movements are purely for fun, they could be included in some of the existing commands. E.g., the “Bounce up and down” command could now also make him move his arms up and down at the same time as his legs.
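
Here is a rough sketch of that idea, again in Python for brevity: two bodies interpret the same commands in their own way, and the “app” code doesn’t change. The class names, the `FORWARD`/`BOUNCE` command strings and the text descriptions standing in for real servo motion are all hypothetical.

```python
# Hypothetical sketch: two bodies interpret the same command set differently.
from abc import ABC, abstractmethod


class Body(ABC):
    """Firmware for one robot body; the command names are shared across bodies."""

    def handle(self, line):
        name, *args = line.split()
        handler = {"FORWARD": self.step_forward, "BOUNCE": self.bounce}.get(name)
        if handler is None:
            return [f"ERROR unknown command {name}"]
        return handler(*map(int, args))

    @abstractmethod
    def step_forward(self): ...

    @abstractmethod
    def bounce(self, times=1): ...


class MobBobLikeBody(Body):
    def step_forward(self):
        return ["legs: step"]

    def bounce(self, times=1):
        return ["legs: bounce"] * times


class ArmedBipedBody(Body):
    """A hypothetical body with extra leg joints and arms."""

    def step_forward(self):
        # Same command, but this body uses its knees as part of the step.
        return ["knees: bend", "legs: step", "knees: straighten"]

    def bounce(self, times=1):
        # Same command, but the arms move up and down along with the legs.
        return ["legs: bounce + arms: raise/lower"] * times


def app_dance(body):
    """The unchanged 'app': it only knows the shared command names."""
    return body.handle("BOUNCE 2") + body.handle("FORWARD")
```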

If there is a need for new, specific arm movements (e.g. do a grabbing motion), then the app will need to be updated as it doesn’t currently know about that command.

Of course, when building robots, there are always hiccups… different bodies mean different step durations, different turning amounts, etc… So, some tweaks to the app will probably be needed…

In my grand vision of “How Cevinius Takes Over the World”… I would like something akin to the MobBob command set, and the way it is transmitted (e.g. Bluetooth), to become some sort of “standard”. Then people could create a bunch of different bodies that understand those commands, and create a bunch of apps that drive the bodies using those commands… With a standard command set in place, these different apps and bodies should mostly be able to mix and play together.
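
As a thought experiment, a shared “standard” could be as simple as one agreed list of command names plus one agreed way of encoding them on the wire (e.g. one text line per command over a Bluetooth serial link). The sketch below assumes exactly that; the names and the encoding are made up for illustration and aren’t an existing standard.

```python
# Hypothetical "standard" command set shared by apps and bodies.
# The command names and the line-based ASCII encoding are assumptions.
from enum import Enum


class Command(Enum):
    STEP_FORWARD = "FORWARD"
    STEP_LEFT = "LEFT"
    BOUNCE = "BOUNCE"


def encode(command, *args):
    """App side: one command per newline-terminated ASCII line, e.g. b'BOUNCE 3\\n'."""
    return (" ".join([command.value, *map(str, args)]) + "\n").encode("ascii")


def decode(raw):
    """Body side: turn the raw bytes back into (Command, [int args])."""
    code, *args = raw.decode("ascii").split()
    return Command(code), [int(a) for a in args]
```

An app written against `Command` and `encode`, and a body written against `decode`, would not need to know anything else about each other. An unknown command code makes `Command(code)` raise `ValueError`, which a body could report back as an error.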

It would allow robotics-oriented people to focus on creating cool new bodies (and write Arduino code to drive those).

It would let AI/game/application-oriented people focus on creating fun, awesome apps for robots.

With a standard in place… you could potentially use an app created by one developer together with the body created by another developer.

However, as I said before, there will no doubt be hiccups (i.e. problems to solve) before we can have this Utopia where installing a new robotics app onto your custom robot is no harder than installing a new phone app onto your specific-model phone… but I think working towards something like this would be fantastic.

Sorry for the long rant… I hope that answers your question? If not, post below and I’ll try again!

Cevinius

Could you share the app code with us?

It would be a way to evolve the program.

Put it under an OpenGL or MIT license; this way we will always have you as our master! LOL..

What do you think about the idea?

Hi Diogenes,

Thanks for your offer to help with a Portuguese translation, and helping to further the project! Unfortunately, I’m not able to share the current version of the app. It contains some proprietary code from 3rd parties and from my work that I’m not allowed to share (or make open source).

However, it would be fantastic to create an open source version that people can grow and develop. I’m thinking of starting a new app that can be open source. That way, there can be translations and extensions, and I can also try out any additions that other people make!

I’ve been completely tied up with work for pretty much the past year!! So, unfortunately, I don’t have time to do this yet, but it’s something I’d really like to do.

Sorry I can’t help for now. Thank you again for writing in and for your interest in my project! 😀

Kevin

It’s been a while since anyone asked a question here, but I hope you can answer me. The app is no longer on the Play Store, or it is not available in my country. Could you upload it somewhere else, like MEGA, so I can download it?